The last reliable (available) path into AI

It seems like every undergrad or graduate school applicant wants to work in artificial intelligence. However, by applying only to the best AI labs, most of them will send solid applications off to be ignored. I get it: people want to work on AI all the time. But you can still be an AI person if you bring the AI approach to something else. The path to admission is easier when you realize you're passionate about [insert non-AI field] and then bring AI into your lab.

Context & my terminology

Throughout this article, I refer to a "pure AI" lab as one predominantly known for its work in AI/ML. While, yes, Sergey and Pieter work in AI + robotics (technically "something else"), the most accessible opportunities are in fields further out, where one brings AI into something new (e.g. physics, energy, analog circuits). Many of my best friends in AI started out in something totally different and made it work. Now I'm starting to think this path is the most reproducible one in the current AI research landscape.

My hypothesis: people should find something else that interests them and apply to do AI + that something. This is the TL;DR of the article. Apply to an applications group that uses AI as a tool, and your odds of acceptance will increase by 10x or more. Read on if you want to learn how UC Berkeley EECS and Berkeley AI Research (BAIR) got to this point. The trends I summarize are happening at all the top CS schools, but I find it much easier to connect the dots by telling the story I am living.

What counts as "pure AI"?

I know I'm making this article a little hard to read by tossing around terms like "pure AI" to describe research groups that do a lot of applied AI work. I'm trying to distinguish groups known for their work in AI from groups that excel at another application area and may try AI.

Are you considering applying to UC Berkeley? I'll use it as an example. Consider three professors: Sergey Levine, Claire Tomlin, and Murat Arcak. All of them work in fields underpinning recent successes of AI: optimization, robotics, control, etc. How will the number of applicants to each of their groups vary? I would guess Levine > Tomlin >> Arcak (where each > is roughly an order of magnitude). Yet a savvy student in any of these groups will have access to the same amazing colleagues and work at Berkeley. If you're worried about getting in, find some "less hyped" professors who match problem spaces you're excited about.

For what it's worth, I think most professors listed on the BAIR website get mentioned in far more applications. BAIR also has an independent admission process, which is definitely more competitive. Before you put all your eggs in that basket, ask people for realistic advice on whether or not you'll get in.

The Evolution of Berkeley EECS: The BAIR Takeover

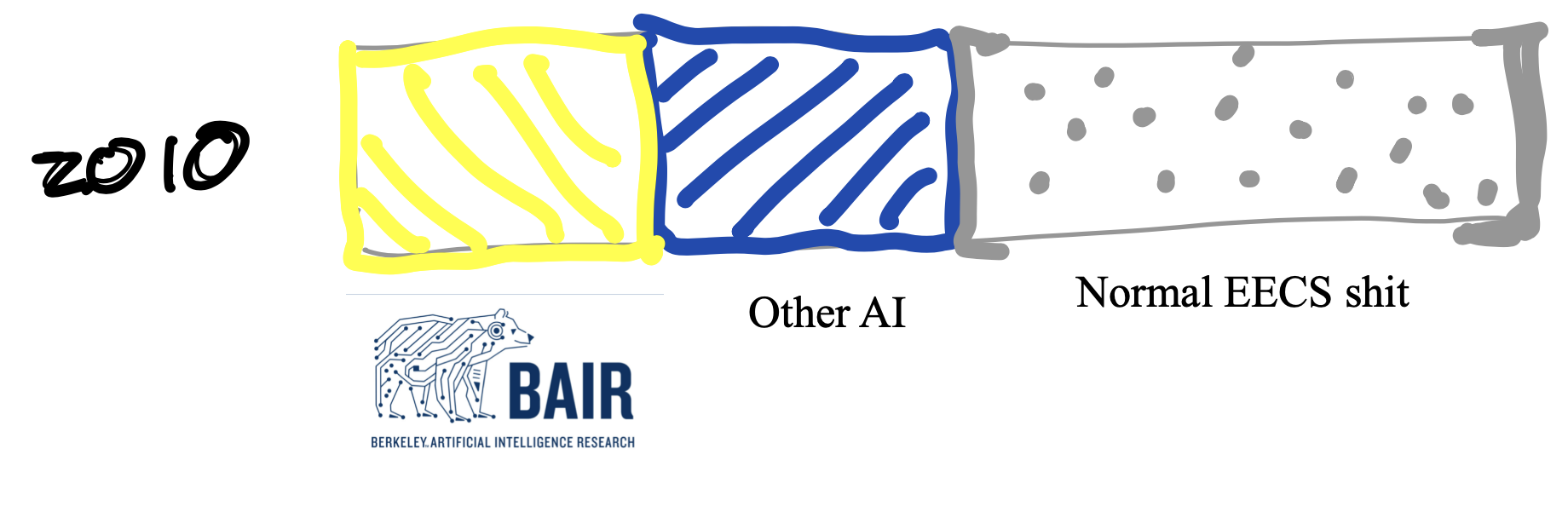

2010

In 2010, the deep learning revolution had not yet happened (to my knowledge BAIR did not technically exist, though many of its faculty were here doing their thing), and AI researchers and applications of AI made up a much smaller minority of the department's headcount. Consider the 2010 drawing, which is not to scale (there was less AI than pictured, something like 10-20% in total including applications). Researchers who did not identify with AI were certainly the majority.

The landscape has really changed in a decade. BAIR's website lists 63 faculty, 420 postdocs and Ph.D. students (lol), and 31 master's students (note: some of the listed members do not have EECS affiliations; it's messy). This is quite the colonization. BAIR lays claim to a large swath of the faculty and students, many of whom aren't necessarily focused on AI. I count myself as one of the claimed! I'm listed as a BAIR student without an advisor 🤔. Other professors listed on the BAIR site regularly spin counter-AI narratives and think some of BAIR's practices, and its grandiosity, are problematic. Differing opinions: the Berkeley Way.

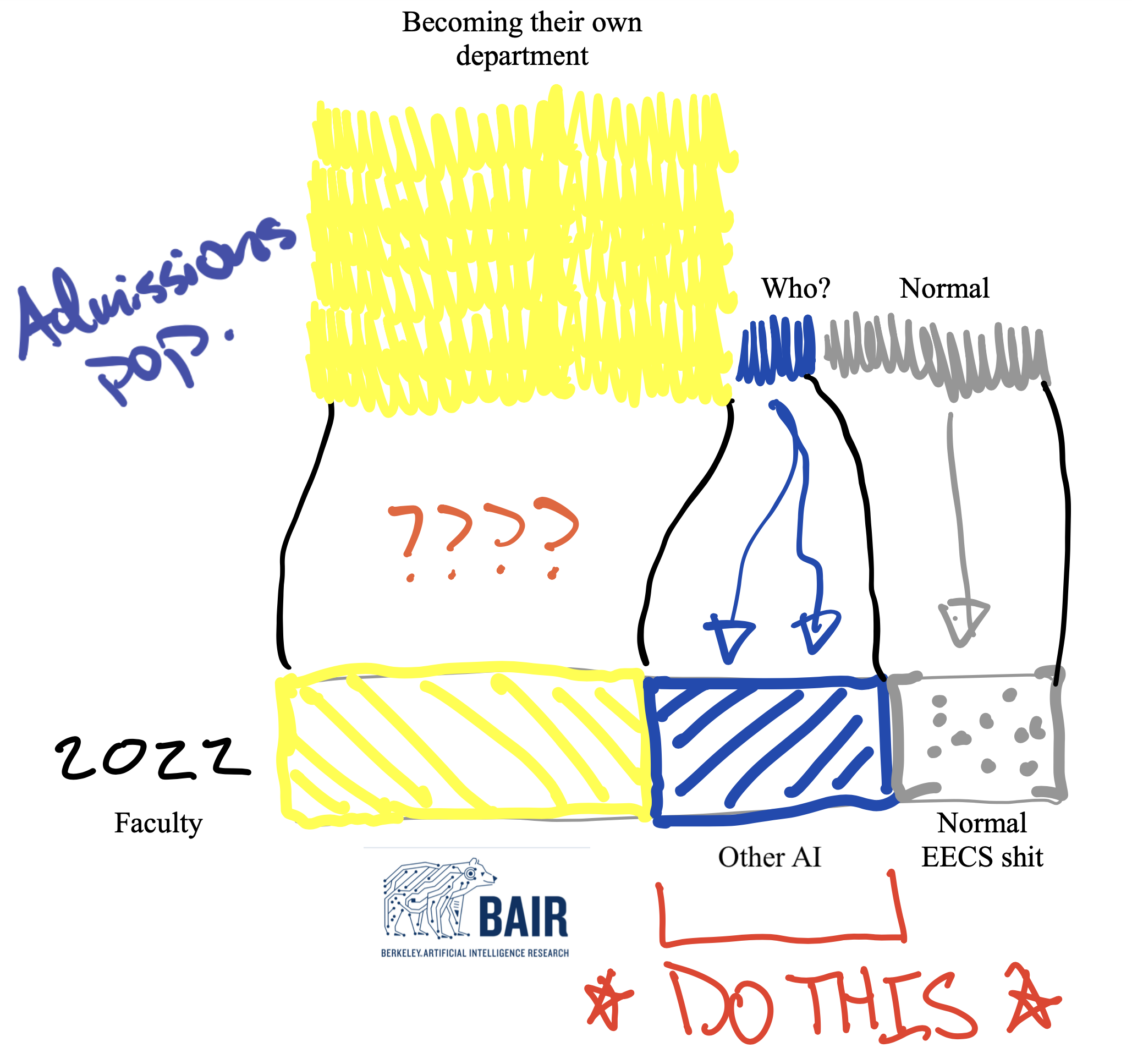

To put the BAIR numbers in perspective, the EECS department's website lists approximately 190 faculty, 730 graduate students, and 3450 undergrads. That puts BAIR at roughly a third of the faculty and more than half of the graduate students (astonishing), with the caveat that BAIR's count includes postdocs and some non-EECS members. The second figure adjusts the first to reflect this. The EECS listing has a ton of other interesting demographics and trends; check it out.
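For a back-of-envelope check of those shares, here is a small Python sketch using only the counts quoted above. It assumes every listed BAIR member counts toward EECS, which overstates the overlap (some are postdocs, and some lack EECS affiliations), so treat the student figure as an upper-end estimate rather than a precise census.

```python
# Counts as quoted from the BAIR and EECS websites.
# Assumption: every listed BAIR member is counted as part of EECS,
# which overstates the overlap (postdocs and non-EECS members included).
bair_faculty = 63
bair_phd_and_postdocs = 420
bair_masters = 31

eecs_faculty = 190
eecs_grad_students = 730

# Share of EECS faculty that BAIR lists.
faculty_share = bair_faculty / eecs_faculty

# Share of EECS graduate students, lumping BAIR postdocs in with students.
student_share = (bair_phd_and_postdocs + bair_masters) / eecs_grad_students

print(f"BAIR share of faculty:       {faculty_share:.0%}")
print(f"BAIR share of grad students: {student_share:.0%}")
```

Taken at face value this prints 33% and 62%. Dropping the postdocs, or the members without EECS affiliations, would pull the student share down toward one half.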

2022

How do most people interface with this reality? Through the application process. Don't apply to pure AI unless you have multiple publications and are still up for a lot of uncertainty. I heard from a reasonably big BAIR group that, collectively, the BAIR professors end up with applications from about 200 top kids and accept only 40. Thousand(s) were likely eliminated before this hat-draw.

Admissions

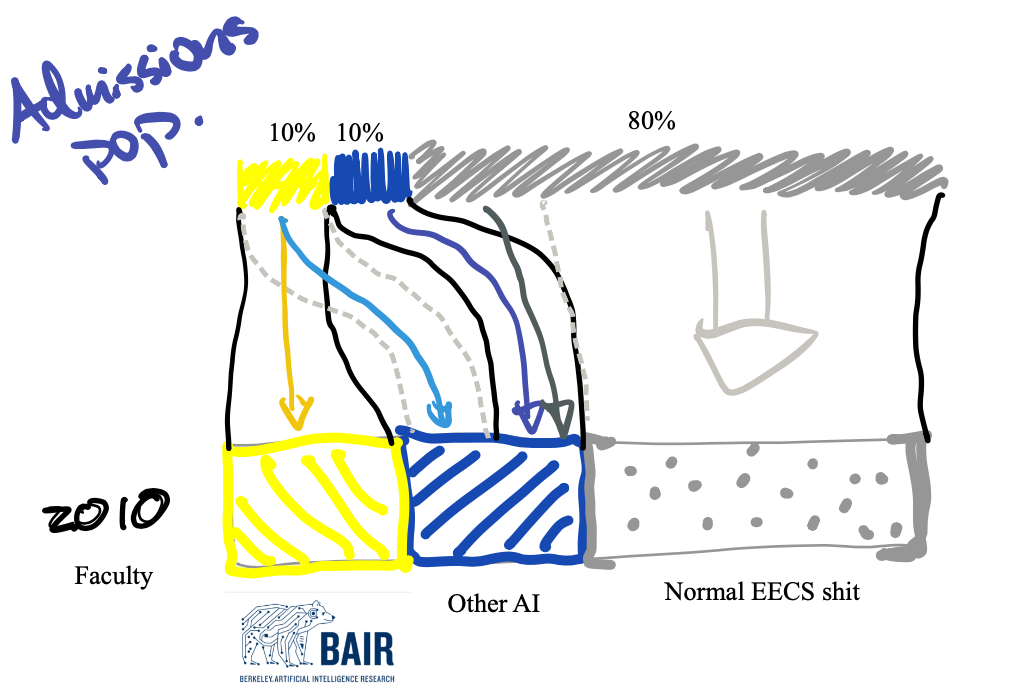

2010

If we consider the proportions of faculty I drew above, this could represent how faculty got students from the admissions process. Some students changed subjects, but all in all it was relatively balanced.

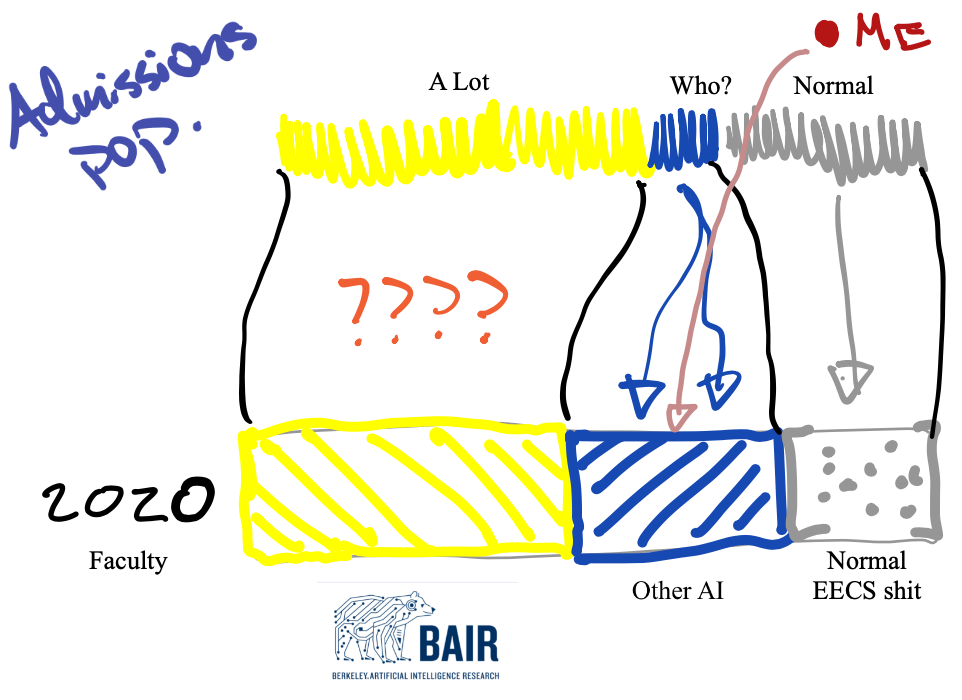

20

As I expect many people in my cohort did, I explored joining a pure AI group after I was admitted and got a respectful "no thank you" from multiple top Berkeley professors. The landscape of who got these opportunities had almost entirely shifted. However, because the intersections of deep learning with other research topics were (and to a large extent still are) underexplored, I got to try fun things in a group not designed around AI research. This journey was largely self-motivated and opportunistic.

I went from a normal hardware EE applicant to one of the many people trying to figure out how machine learning works. In my first few years, I found a bunch of people doing the same. By years 2-4 of my Ph.D., I knew plenty of people working on robotics, signal processing, optimization, etc. who were not in BAIR but were on a more low-key trajectory to the same end goal. The hype in BAIR is a double-edged sword for work-life balance and career momentum. Applications of AI are generally a less intense field (largely because they sit adjacent to the ICML, ICLR, NeurIPS paper mill rather than inside it), so we got more time to figure out how we work and to develop research and scientific fundamentals on top of understanding the numerical shithousery of deep learning.

I see the share of admitted students who are aware of this middle option shrinking, even though the field of opportunity is still large. Join the alternate AI extravaganza!

2022 (today onward)

There is one last fold to the tale that needs discussing: BAIR's autonomous admission decisions and its increasingly department-like status. The figure above does not reflect the true proportions of the admission pool. The number of applicants interested in joining faculty listed on BAIR's site has grown in an exponential-like fashion. There is now a clear delineation in the application process between BAIR-targeting students and everyone else. The realistic goal of an application should be getting into Berkeley, not just BAIR.

In reality, once a student arrives, they can still work with BAIR professors; they may just need to put in a bit more work and find a foundation in another research group first. A different path also has an advantage: you get to view problems in ways no one else can.

Why is this the last reliable path? I think it's still hard to execute, but most of the other cards on the table are effectively impossible. I'm excited to hear from those of you who give this a shot. I believe it can work.

Finally, it's also worth remembering that a Ph.D. is no longer required for most industry jobs, but no one I talk to knows a repeatable path to getting those jobs without the credential. To get in without a Ph.D., you had to be earlier. Personally, I don't want to rely on luck to get where I want to be, and I wanted to let everyone know you don't need to either.